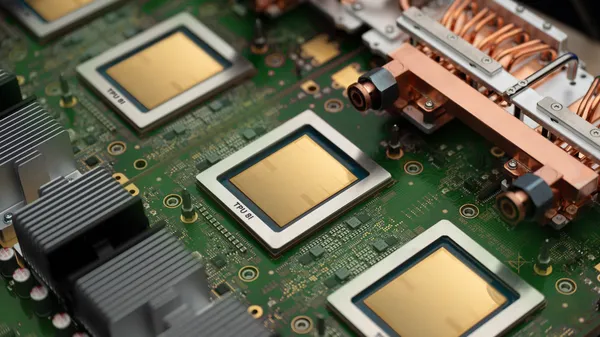

Google’s been cooking up TPUs for years, and their eighth generation just landed. But instead of a single monolithic chip, they’re splitting the workload across two specialized variants. I’ve been watching their TPU evolution since the first one hit the lab, and this is the most interesting pivot yet.

One chip is built for reasoning and planning — the kind of deep, multi-step thinking that agentic AI needs to figure out what to do next. The other is optimized for execution: taking actions, calling APIs, moving data around. It’s a classic split between brain and brawn, but applied to the AI stack in a way I haven’t seen done this deliberately before.

Let’s be real: LLMs are great at generating text, but agents need to actually do things. They need to parse a user’s intent, break it down into steps, query databases, trigger workflows, and then synthesize results. That’s a fundamentally different compute profile than just running inference on a prompt.

The reasoning chip is beefy on memory bandwidth and matrix math — think massive parallel tensor operations with low latency between layers. It’s built for the chain-of-thought stuff that makes modern agents actually useful. The execution chip, by contrast, has faster I/O and tighter integration with Google’s own infrastructure (Cloud Run, BigQuery, you name it). It’s meant to be the muscle that carries out the plan.

What I find smart about this is that it mirrors how actual software systems work. You don’t use the same server for your API gateway and your database — they have different bottlenecks. Google is essentially admitting that AI workloads have the same heterogeneity, and they’re finally building silicon that reflects that.

Of course, this only matters if developers actually use both chips in tandem. Google’s pitching this as a seamless integration through their Vertex AI platform, where the orchestrator decides which chip handles which part of a request. If it works as advertised, it could slash latency for complex agent tasks — but I’ve seen enough “seamless” integrations fall apart in production to stay skeptical.
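To make the orchestrator idea concrete, here’s a minimal Python sketch of how a router might tag each step of an agent request as either reasoning or execution and send it to the matching pool. Everything in it is hypothetical: `StepKind`, `route`, `count_handoffs`, and the pool names are my own illustrative stand-ins, not Vertex AI’s API, which Google hasn’t described at this level of detail. I also count hand-offs between pools, because every switch from one chip to the other is a spot where the promised latency savings could leak away.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class StepKind(Enum):
    # Hypothetical labels: which kind of chip a step would be routed to.
    REASON = "reason"    # planning, chain-of-thought work
    EXECUTE = "execute"  # tool calls, API requests, data movement


@dataclass
class Step:
    kind: StepKind
    description: str


def route(steps: list[Step]) -> dict[str, list[str]]:
    """Group steps by the (hypothetical) accelerator pool that would run them."""
    pools: dict[str, list[str]] = {"reasoning_pool": [], "execution_pool": []}
    for step in steps:
        pool = "reasoning_pool" if step.kind is StepKind.REASON else "execution_pool"
        pools[pool].append(step.description)
    return pools


def count_handoffs(steps: list[Step]) -> int:
    """Count how often consecutive steps switch kind, i.e. cross-chip hops."""
    return sum(1 for prev, cur in zip(steps, steps[1:]) if prev.kind is not cur.kind)


# An illustrative agent request, in execution order.
SAMPLE_REQUEST = [
    Step(StepKind.REASON, "parse user intent"),
    Step(StepKind.REASON, "break task into steps"),
    Step(StepKind.EXECUTE, "query orders database"),
    Step(StepKind.EXECUTE, "trigger refund workflow"),
    Step(StepKind.REASON, "synthesize results"),
]

if __name__ == "__main__":
    for pool, work in route(SAMPLE_REQUEST).items():
        print(pool, "->", work)
    print("handoffs:", count_handoffs(SAMPLE_REQUEST))
```

Running it groups the three reasoning steps and the two execution steps into their pools and reports two hand-offs. A real scheduler would also have to weigh the cost of shipping context across those hops, which is exactly where a “seamless” integration gets tested.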

The bigger picture: this is Google betting that agentic AI isn’t just a passing hype cycle. They’re investing in hardware specifically for autonomous workflows, not just chat completions. Whether that pays off depends on how many real-world agent deployments materialize in the next 12 months. But I’ll give them credit for thinking beyond the standard GPU playbook.

I’d love to see benchmarks comparing these TPUs against NVIDIA’s H100s or AMD’s MI300X for agentic workloads. Google’s been cagey about raw numbers so far. My gut says the execution chip will shine in latency-sensitive tasks, while the reasoning chip might struggle against general-purpose GPUs that can be repurposed more flexibly.

Either way, this is a clear signal: the future of AI hardware isn’t just about bigger models. It’s about smarter division of labor between components. And Google just drew the first line in the sand.
